The pitch sounds reasonable: hook the vessel up to Starlink, pipe AI inference to the cloud, done. Every vendor with a cloud AI product makes this case. It's clean, it's simple, and it's completely unworkable in practice.

I've spent the last eight years building AI systems for maritime environments. Before that, I flew naval aircraft where "link drop" meant something very different than a Zoom call stalling. The uncomfortable truth is this: cloud AI assumes connectivity that doesn't exist on the ocean. Not now, not ever, not with any constellation of satellites humanity launches.

Let me walk through why.

The Satellite Bandwidth Myth

Vendors love to point at Starlink Maritime's 350 Mbps peak throughput and call it a day. That's the number on the marketing slide. Here's what actually happens:

Shared bandwidth. That 350 Mbps is the pipe's theoretical maximum, not what's available to your vessel. In a busy shipping lane or port approach, you're sharing that capacity with every other vessel within hundreds of kilometers. Real throughput drops to 50-100 Mbps, sometimes less. During peak hours, I've seen it crater to 20 Mbps on commercial routes.

Metered costs. Maritime satellite isn't like WiFi at a coffee shop. You're paying per gigabyte, and AI inference isn't light traffic. A single computer vision model processing 10 camera feeds at 1080p generates roughly 50-200 GB/day depending on frame rate and model complexity. Multiply by the number of models you need running simultaneously. The math gets ugly fast.
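To see where a daily figure in that range can come from, here is a rough Python sketch. The per-feed bitrates are illustrative assumptions for compressed 1080p video, not measurements from any particular camera or codec, and `daily_volume_gb` is a hypothetical helper.

```python
def daily_volume_gb(feeds: int, mbps_per_feed: float) -> float:
    """Data sent per day, in decimal gigabytes, for continuous streaming."""
    seconds_per_day = 24 * 60 * 60
    bits_per_day = feeds * mbps_per_feed * 1_000_000 * seconds_per_day
    return bits_per_day / 8 / 1_000_000_000


# Assumed per-feed bitrates (Mbps); real values depend on codec and frame rate.
for mbps in (0.5, 1.0, 2.0):
    print(f"{mbps} Mbps/feed -> {daily_volume_gb(10, mbps):.0f} GB/day")
```

Under these assumptions, ten feeds land between roughly 54 and 216 GB/day. Multiply by your per-gigabyte rate to get the bill.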

Weather degradation. Ku-band and Ka-band signals attenuate badly in heavy rain (rain fade). Tropical storms don't just ground aircraft -- they degrade or completely block satellite links. Your AI system doesn't care that it's raining, but it will care when its inference pipeline stalls because the cloud endpoint timed out.

Legacy C-band VSAT fares better in weather but often delivers only 256-512 kbps. That's not a typo. That's kilobits per second. Try running a modern language model over a 256 kbps link. I'll wait.
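For a sense of scale, this minimal sketch computes how long a single upload takes at those rates. The 1 MB payload is an assumed size for one compressed camera frame, and the calculation ignores protocol overhead and latency, so real transfers would be slower.

```python
def transfer_seconds(payload_bytes: int, link_kbps: float) -> float:
    """Ideal time to push a payload through a link of the given rate."""
    return payload_bytes * 8 / (link_kbps * 1000)


frame_bytes = 1_000_000  # assumed size of one compressed frame
for kbps in (256, 512):
    print(f"{kbps} kbps -> {transfer_seconds(frame_bytes, kbps):.1f} s per frame")
```

That works out to about 31 seconds per frame at 256 kbps and about 16 seconds at 512 kbps, before the cloud has done any work at all.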

Latency Isn't Just a Speed Problem

Here's what most AI vendors don't understand: latency isn't about how fast data travels. It's about whether your system can function at all when the round-trip time exceeds your operational requirements.

Round-trip reality. Light travels fast, but distance matters. A geostationary satellite sits 35,786 km above the equator. A request goes up to the satellite, down to a ground station, and on to the cloud; the response retraces the same path. Propagation alone costs close to half a second, and typical round-trip latency is 600-700 ms. Add network jitter, routing overhead, and cloud processing time, and you're looking at 1.5-3 seconds per request.
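The physics floor is easy to check. This sketch assumes the best case, with the vessel and ground station both directly beneath the satellite, and counts only speed-of-light propagation over the four legs of a request and response. Real slant ranges are longer, and modem and gateway processing come on top, which is how you get to 600 ms and beyond.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458
GEO_ALTITUDE_KM = 35_786


def geo_round_trip_ms(altitude_km: float = GEO_ALTITUDE_KM) -> float:
    """Propagation-only round trip through a geostationary satellite.

    Four legs: vessel -> satellite -> ground station for the request,
    then ground station -> satellite -> vessel for the response.
    """
    legs = 4
    return legs * altitude_km / SPEED_OF_LIGHT_KM_S * 1000


print(f"{geo_round_trip_ms():.0f} ms")
```

No engineering improvement gets under that number for a geostationary link.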

Starlink's LEO constellation reduces this to 40-70 ms for the satellite hop, but that's only if you're continuously connected to the same satellite. More on that shortly.

Why latency kills AI. Let's say you're running collision avoidance. The camera sees a container floating off your starboard bow. You send the image to the cloud, cloud runs inference, cloud returns "obstacle, bearing 085, range 200 meters." Over a 1.5-3 second round trip, you've closed roughly 9-19 meters at 12 knots and 15-31 meters at 20 knots. In heavy traffic, that gap is the difference between a near-miss and a headline.
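The closing-distance arithmetic can be sketched in a few lines. The latencies used here are the 1.5-3 second round-trip figures quoted above for geostationary links; `distance_closed_m` is a hypothetical helper, and it assumes the obstacle is stationary and dead ahead.

```python
KNOT_IN_M_PER_S = 1852 / 3600  # one nautical mile per hour, in meters per second


def distance_closed_m(speed_knots: float, latency_s: float) -> float:
    """Distance the vessel covers while waiting for a response."""
    return speed_knots * KNOT_IN_M_PER_S * latency_s


for knots in (12, 20):
    for latency in (1.5, 3.0):
        d = distance_closed_m(knots, latency)
        print(f"{knots} kn, {latency} s round trip -> {d:.1f} m")
```

At 12 knots and a 3-second round trip, the vessel covers about 18.5 meters before the answer arrives. If the obstacle is another vessel under way, the closing speed is higher still.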

Computer vision for maritime object detection needs sub-200 ms latency to be operationally meaningful. Cloud can't guarantee that. Ever.

The inference cost of latency. Large language models don't just need bandwidth; they need sustained throughput. A 7-billion-parameter model generating a 100-token response needs roughly 2-4 seconds on a decent GPU. That's fine when the link is up and fast. When the link is slow, you're waiting 30 seconds for a response that your crew needed five seconds ago.

What Happens During a Satellite Handover

LEO constellations like Starlink work by bouncing between satellites as they orbit. Your vessel's antenna tracks one satellite, then hands off to the next. This is where things get interesting.

The handover gap. Every 3-7 minutes (depending on orbital geometry), your terminal switches satellites. The actual handover takes 50-200 ms. During that window, your link drops. Packets queue. Some get dropped. TCP connections can hang.
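Taking those intervals and gap durations at face value, a quick sketch shows how often a pipeline has to survive a handover. Both helpers are hypothetical and simply turn the figures above into daily totals.

```python
def handovers_per_day(interval_min: float) -> float:
    """Number of satellite handovers in 24 hours at a fixed interval."""
    return 24 * 60 / interval_min


def gap_seconds_per_day(interval_min: float, gap_ms: float) -> float:
    """Cumulative link-gap time per day, given the gap per handover."""
    return handovers_per_day(interval_min) * gap_ms / 1000


for interval, gap in ((3, 200), (7, 50)):
    n = handovers_per_day(interval)
    total = gap_seconds_per_day(interval, gap)
    print(f"every {interval} min, {gap} ms gap -> {n:.0f} handovers, {total:.0f} s/day")
```

The cumulative downtime is small: about 96 seconds a day in the worst case here. The problem is the count. Hundreds of times a day, any in-flight request can be hit.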

For a human browsing the web, this is a minor blip. For an AI inference pipeline, it's a failure condition. Your model client times out waiting for a response. Your retry logic kicks in. If you're running multiple parallel inference requests (common for multi-camera systems), you get partial failures, race conditions, and corrupted outputs.
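Here is a minimal sketch of how partial failure shows up in a multi-camera client. Everything in it is hypothetical: `fake_cloud_inference` stands in for a real cloud call, and the delays simulate a link that stalls for some requests but not others.

```python
import asyncio
from typing import Dict, Optional


async def fake_cloud_inference(camera_id: int, delay_s: float) -> str:
    """Stand-in for a cloud call; delay_s simulates link conditions."""
    await asyncio.sleep(delay_s)
    return f"camera-{camera_id}: ok"


async def infer_all(delays: Dict[int, float], timeout_s: float) -> Dict[int, Optional[str]]:
    """Run one request per camera in parallel; None marks a timed-out request."""

    async def one(camera_id: int, delay_s: float) -> Optional[str]:
        try:
            return await asyncio.wait_for(
                fake_cloud_inference(camera_id, delay_s), timeout_s
            )
        except asyncio.TimeoutError:
            return None

    results = await asyncio.gather(*(one(c, d) for c, d in delays.items()))
    return dict(zip(delays, results))


if __name__ == "__main__":
    # Cameras 2 and 4 hit a simulated stall longer than the timeout.
    simulated = {1: 0.01, 2: 0.5, 3: 0.02, 4: 0.5}
    print(asyncio.run(infer_all(simulated, timeout_s=0.1)))
```

The caller gets answers for some cameras and nothing for others, all stamped with the same moment in time. What the system does with that half-picture is a design decision that purely local inference never has to make.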

Beam switching. LEO satellites use spot beams. As your vessel moves, your terminal switches between beams, sometimes between satellites. Each beam switch is another potential disruption. In high-latitude routes (think North Sea, Baltic winter operations), beam density is lower and handovers are more frequent.

Antenna tracking failure. Maritime antennas have a hard life. They track satellites while the vessel pitches and rolls. In heavy seas, the antenna loses lock. When it reacquires, it's a cold restart of the link. This can happen dozens of times per crossing in North Atlantic conditions.

The operational reality. I've spoken to vessel operators who've installed Starlink Maritime. Their experience: the link works great until it doesn't. And "doesn't" happens at the worst times -- exactly when you need AI assistance most: heavy traffic, reduced visibility, narrow channels.

Data Sovereignty: The Problem Nobody Talks About

Here's a problem that doesn't show up in any bandwidth specification but matters enormously to vessel operators:

Your operational data leaves the vessel. Every inference request to the cloud includes imagery, sensor data, vessel position, and operational context. You're transmitting what amounts to a detailed technical picture of your vessel's systems, navigation patterns, and cargo to a cloud server operated by a US company (or Chinese, or European, depending on the provider).

Regulatory exposure. If you're a US-flagged vessel, you're subject to MTSA requirements and, if you participate in the voluntary CTPAT program, its security criteria around data handling. If you're EU-flagged, GDPR can apply to personal data, such as crew imagery, even when it's processed outside the EU. If you're carrying hazmat or operating in sensitive waters, your data trail is a concern.

Competitor exposure. Your inference data tells a cloud provider exactly what kind of operations you run, when you run them, and how your systems perform. That's commercially sensitive information. Does your AI vendor's Terms of Service actually prohibit them from using that data to improve their models or share with competitors? Have you read it?

The "we don't see your data" myth. Most cloud AI providers claim they don't retain inference data. That's technically true at the application layer. But the network traffic is visible to the satellite provider, to ground station operators, to anyone with access to the network infrastructure. Your inference patterns are metadata, and metadata tells a story.

On-premise AI keeps all of this inside the vessel's own compute infrastructure. The data never leaves the ship. That's not a feature request; it's a fundamental architectural difference.

Reliability: The Single Point of Failure

Every cloud AI system introduces a dependency chain:

- Satellite link → ground station → internet backbone → cloud API → inference server → response

Break any link in that chain, and your AI stops working. At sea, the weakest link is the first one.
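The arithmetic behind a serial dependency chain is worth seeing once. In this sketch every availability figure is invented for illustration, and the stages are assumed to fail independently; real outages (weather, for instance) are often correlated.

```python
from math import prod
from typing import Dict


def chain_availability(stages: Dict[str, float]) -> float:
    """Availability of a serial chain: every stage must be up at once."""
    return prod(stages.values())


# Made-up availability figures, for illustration only.
stages = {
    "satellite link": 0.95,
    "ground station": 0.999,
    "internet backbone": 0.999,
    "cloud API": 0.999,
    "inference server": 0.999,
}

print(f"end-to-end availability: {chain_availability(stages):.3f}")
```

With these numbers the chain comes out at about 0.946, lower than its weakest stage. A serial chain can never be more available than its worst link, and every added hop only pulls the product down.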

Redundancy isn't the answer. You can install both VSAT and Starlink terminals. Reliability improves, but now you have two satellite links to manage, two bills to pay, and still no guarantee of uptime when you need it.

Graceful degradation. The real question isn't "how reliable is my link?" It's "what does my AI system do when the link fails?" Cloud AI vendors don't like this question, because the answer is: it stops. Full stop. Your collision avoidance, your crew assistance, your watchstanding support -- all of it goes dark.

On-premise AI doesn't have this problem. If the inference runs locally, the satellite link is irrelevant. Your system keeps working whether the link is up or down. That's not a feature; it's the only acceptable architecture for maritime operations.

Why Edge AI Is the Only Solution

Let me be direct: there's no amount of satellite bandwidth that makes cloud AI viable for mission-critical maritime operations. Not at any price point. Not with any technology on the roadmap.

The fundamental problem isn't bandwidth or latency numbers. It's architectural. Cloud AI assumes a world where connectivity is abundant, cheap, and reliable. That world doesn't exist at sea. It never will.

What works instead: sovereign on-premise inference. Compute that lives on the vessel, runs the models locally, and produces outputs without requiring any external connection.

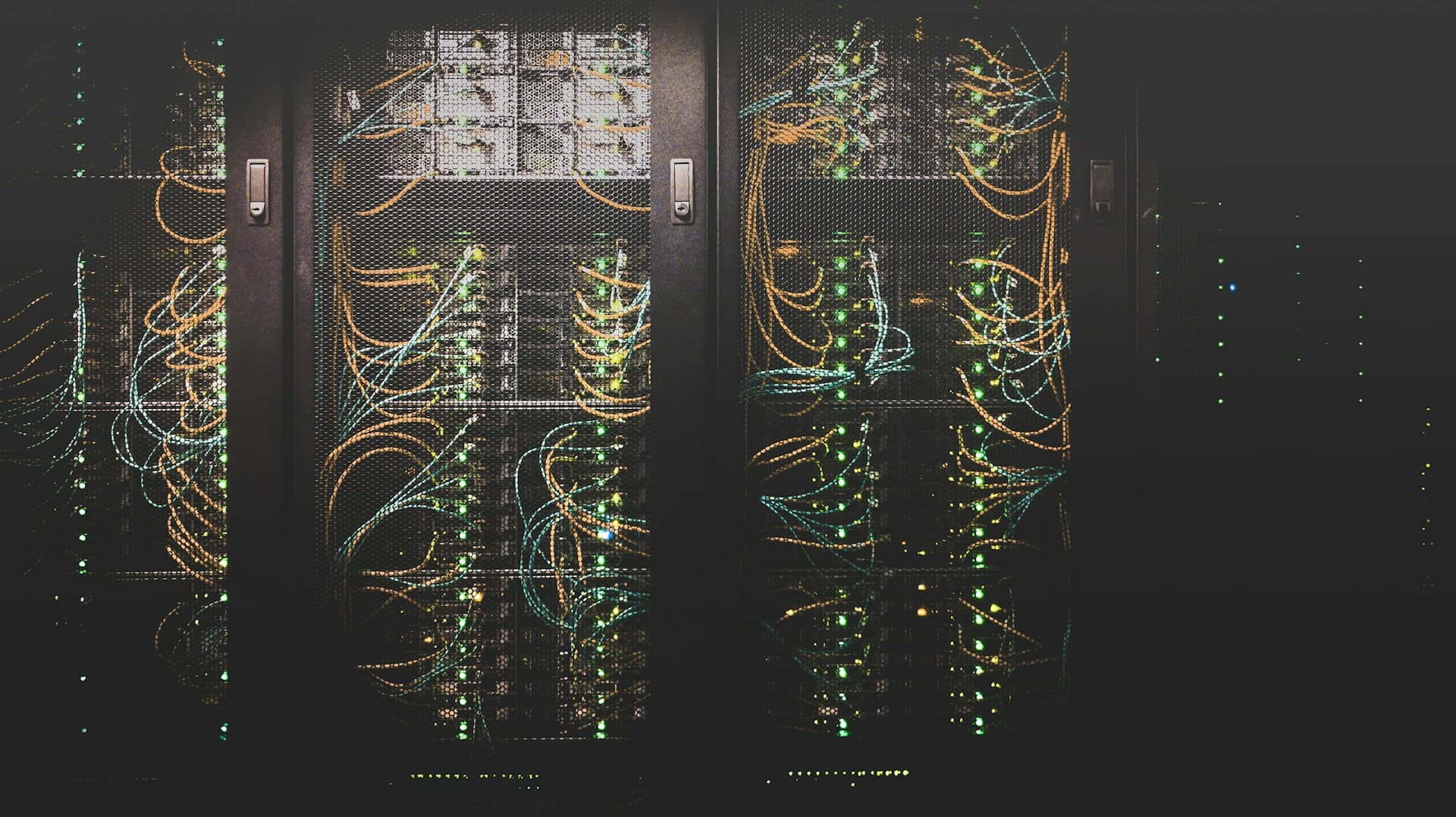

This is what we build at ShipboardAI. Our systems run full inference pipelines -- computer vision, language models, decision support -- entirely on the vessel. No cloud dependency. No network round trip. No data sovereignty issues.

The numbers work. A modern GPU blade (think NVIDIA L40S or equivalent) fits in a standard 19-inch rack mount. It draws under 500 W. It processes 10+ simultaneous video streams and runs a 7-billion-parameter language model concurrently. Total power consumption: under 2 kW with cooling. For a vessel that runs auxiliary generators 24/7, this is noise.

The operational model is different. Rather than paying per inference or per gigabyte, you own the compute. Capital expense, one time. The system works from day one and keeps working forever. Updates happen in port, over a local network, when you have bandwidth to spare.

It actually gets better over time. Because your inference runs locally, you can collect operational data onboard, fine-tune models to your specific routes and vessel types, and deploy improvements without asking anyone's permission. Your AI gets more capable the longer you run it.

The Bottom Line

Cloud AI for maritime is a solution built for a different environment. It works fine where connectivity is reliable. The ocean isn't one of those places.

If you're evaluating AI systems for a vessel -- commercial, yacht, or government -- ask the hard questions upfront:

- What happens when the satellite link drops?

- Where does my operational data go?

- What's the actual latency under real-world conditions?

- What happens during handover events?

If the answer involves the cloud, you have the wrong system.

We build on-premise AI for vessels that can't afford to have their AI go dark. If you're running operations where AI matters, let's talk.

Contact ShipboardAI -- we can walk through what sovereign inference looks like for your fleet.